DataSet

Method

Analysis

GitHub Repo

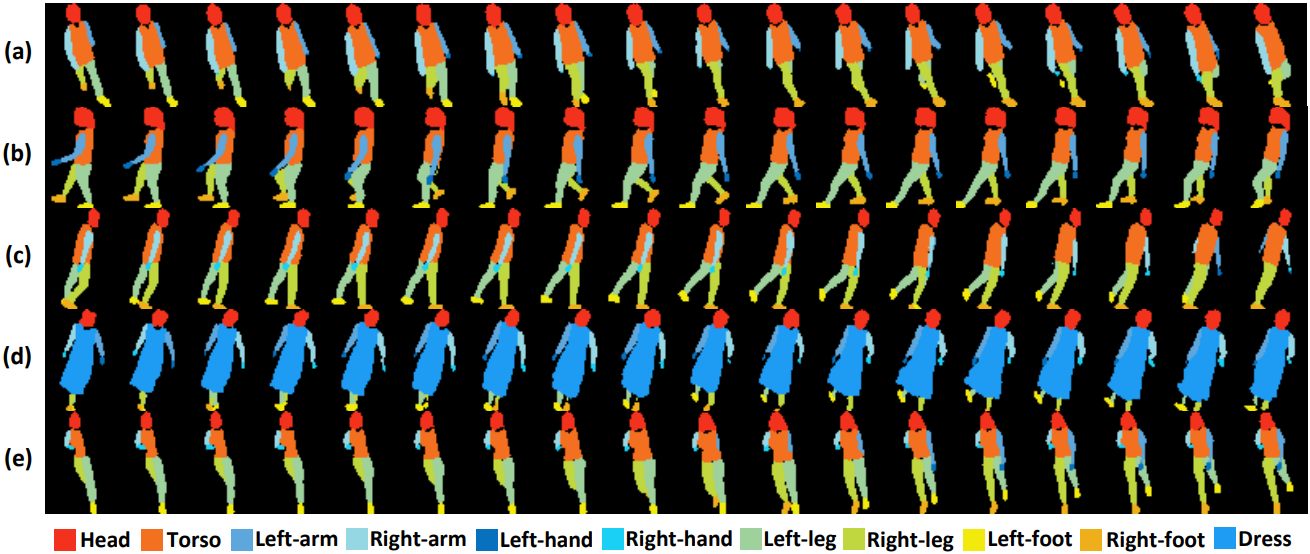

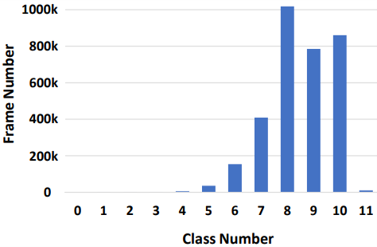

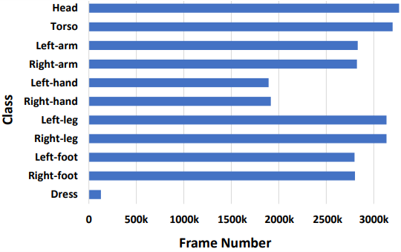

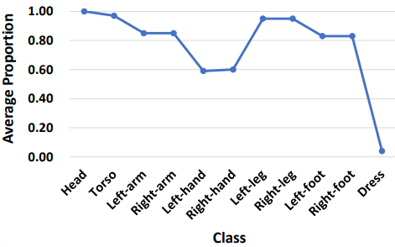

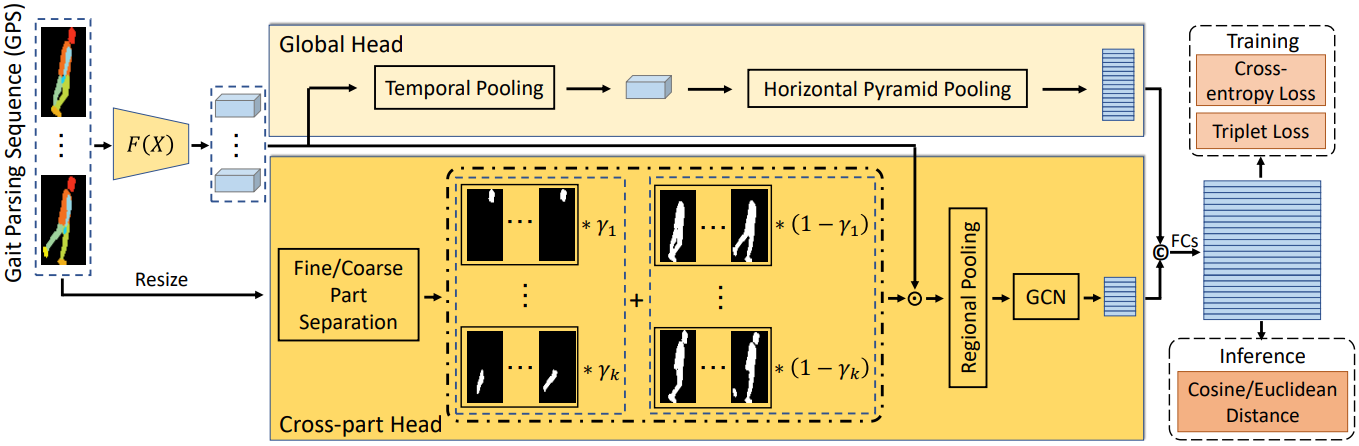

Binary silhouettes and keypoint-based skeletons have dominated human gait recognition studies for decades because they are easy to extract from video frames. Despite their success in in-the-lab environments, they usually fail in real-world scenarios because their gait representations have low information entropy. To achieve accurate gait recognition in the wild, this paper presents a novel gait representation, named Gait Parsing Sequence (GPS). GPSs are sequences of fine-grained human segmentation, i.e., human parsing, extracted from video frames, so they have much higher information entropy to encode the shapes and dynamics of fine-grained human parts during walking. Moreover, to effectively explore the capability of the GPS representation, we propose a novel human parsing-based gait recognition framework, named ParsingGait. ParsingGait contains a Convolutional Neural Network (CNN)-based backbone and two lightweight heads. The first head extracts global semantic features from GPSs, while the second learns mutual information of part-level features through Graph Convolutional Networks to model the detailed dynamics of human walking. Furthermore, due to the lack of suitable datasets, we build the first parsing-based dataset for gait recognition in the wild, named Gait3D-Parsing, by extending the large-scale and challenging Gait3D dataset. Based on Gait3D-Parsing, we comprehensively evaluate our method and existing gait recognition methods. Specifically, ParsingGait achieves a 17.5% Rank-1 increase compared with the state-of-the-art silhouette-based method. In addition, when silhouettes are replaced with GPSs, current gait recognition methods achieve improvements of about 12.5% to 19.2% in Rank-1 accuracy. The experimental results show a significant improvement in accuracy brought by the GPS representation and the superiority of ParsingGait.
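The results above are reported as Rank-1 accuracy. As a rough illustration of what that metric measures, the sketch below counts a probe sequence as correct when its nearest gallery feature belongs to the same identity. It is only a minimal sketch: the function names, the toy 2-D features, and the use of Euclidean distance are assumptions for illustration, not the evaluation protocol used in the paper.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def rank1_accuracy(probe_feats, probe_ids, gallery_feats, gallery_ids):
    """Fraction of probes whose nearest gallery sample has the same identity.

    Hypothetical helper: the distance metric and data layout are assumptions.
    """
    hits = 0
    for feat, pid in zip(probe_feats, probe_ids):
        # Index of the gallery feature closest to this probe.
        nearest = min(
            range(len(gallery_feats)),
            key=lambda j: euclidean(feat, gallery_feats[j]),
        )
        if gallery_ids[nearest] == pid:
            hits += 1
    return hits / len(probe_feats)


# Toy data (made up for illustration): one gallery feature per identity.
gallery_feats = [[0.0, 0.0], [1.0, 1.0], [5.0, 5.0]]
gallery_ids = ["A", "B", "C"]

probe_feats = [[0.1, -0.1], [0.9, 1.2], [2.0, 2.0], [4.0, 4.5]]
probe_ids = ["A", "B", "C", "C"]

acc = rank1_accuracy(probe_feats, probe_ids, gallery_feats, gallery_ids)
print(f"Rank-1 accuracy: {acc:.2f}")
```

In this toy example the third probe is closer to identity B than to its true identity C, so three of the four probes are matched correctly.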

Zheng, Liu, Wang, Wang, Yan, Liu. Parsing is All You Need for Accurate Gait Recognition in the Wild. In ACM MM, 2023. (arXiv)

(Supplementary)

Acknowledgements

This work was supported by the National Key Research and Development Program of China under Grant 2020YFB1406604, Beijing Nova Program (20220484063), National Natural Science Foundation of China (61931008, U21B2024), "Pioneer", Zhejiang Provincial Natural Science Foundation of China (LDT23F01011F01).

Contact